What 97 AI Projects Taught Me About What Actually Works

Key Takeaways

- ✓ Start AI projects with specific broken processes, not general technology exploration - success rate jumps from 31% to 83%

- ✓ Data quality determines everything - firms with clean, structured data see ROI within 45 days vs 3-6 months for messy data

- ✓ Champion-led adoption achieves 91% user adoption vs 34% for mandated rollouts

- ✓ Measure business impact (time saved, errors reduced, revenue generated) not technical metrics for sustainable ROI

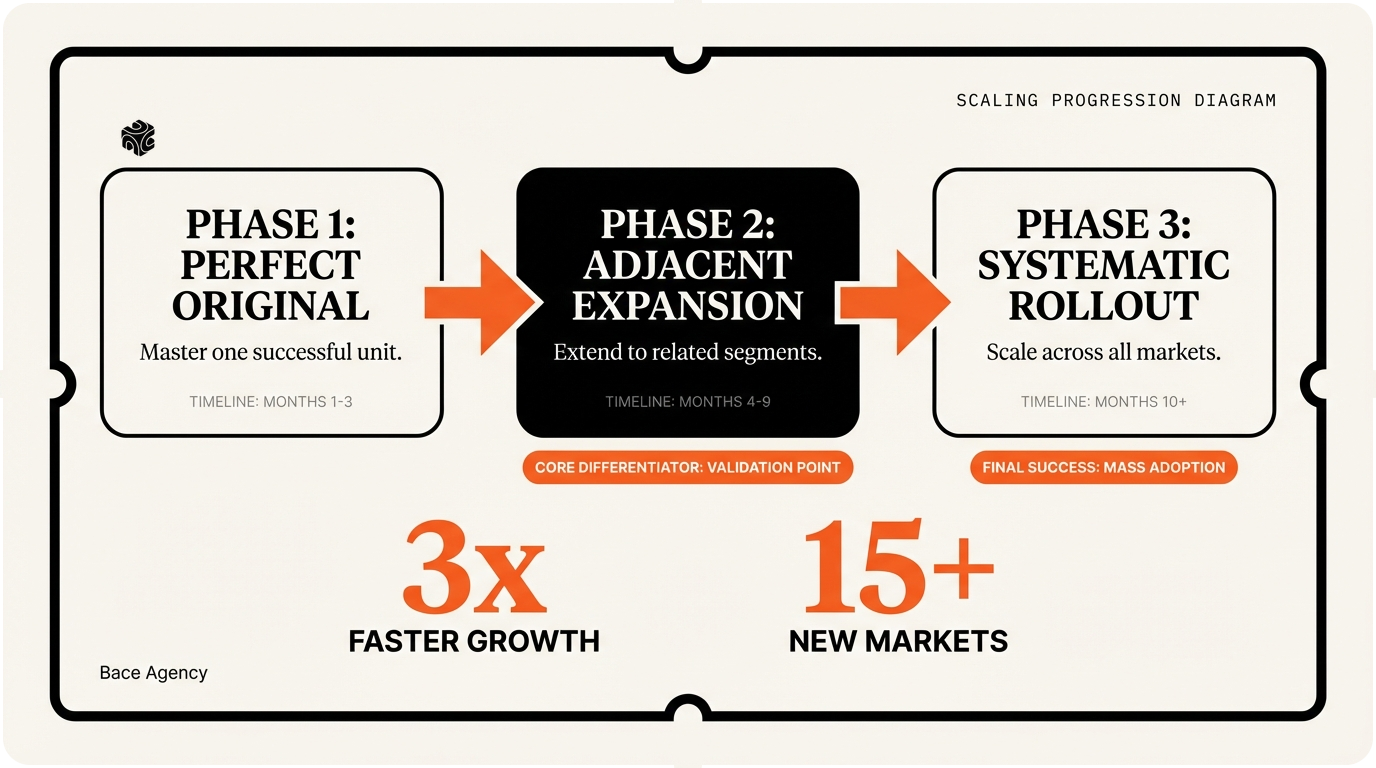

- ✓ Scale methodically through three phases: perfect the original, expand to adjacent uses, then systematic rollout

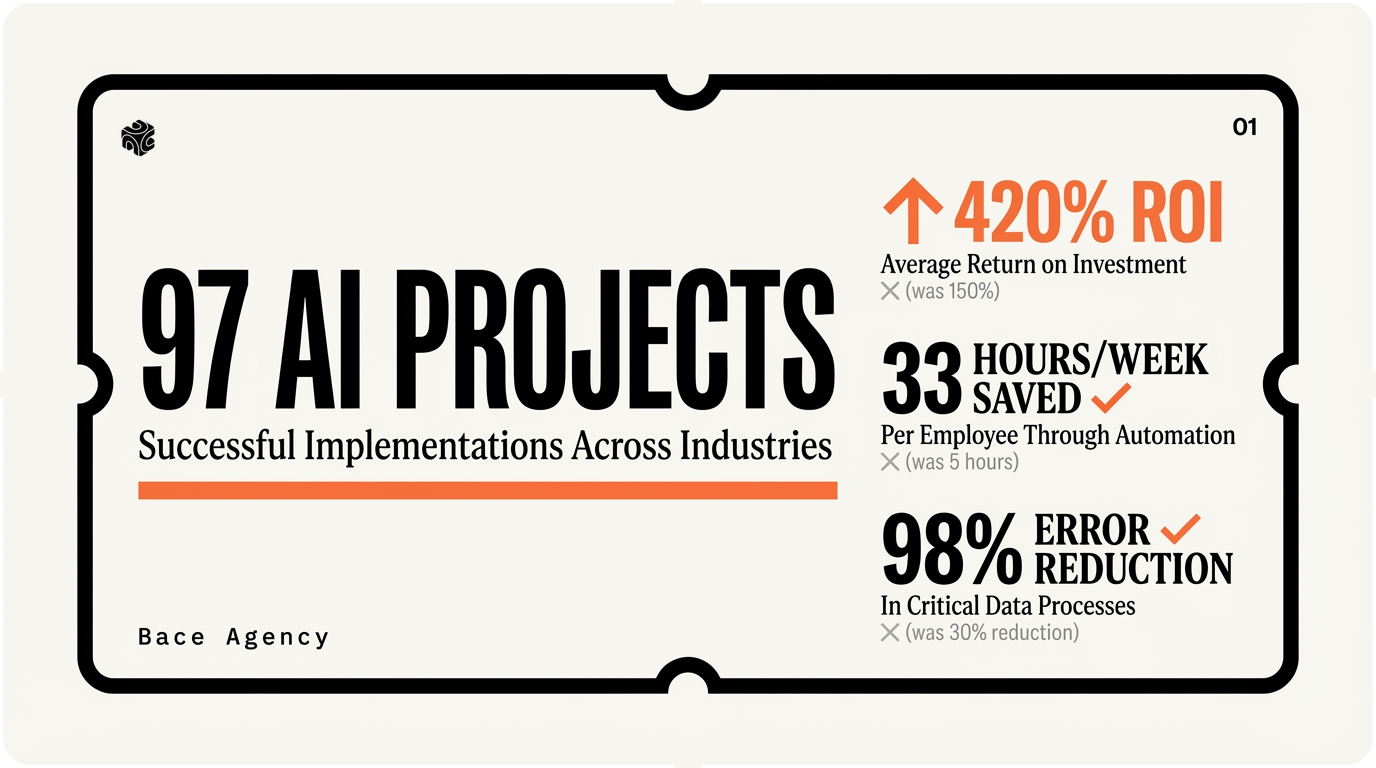

I just closed my 97th AI project last week. A Highland Park insurance agency that went from 4.2 hours per claim to 47 minutes. As I updated my project tracker, I realized I've been keeping meticulous notes on every single engagement since I launched Bace Agency.

The patterns are crystal clear now. The firms that succeed with AI follow the same playbook. The ones that fail make the same predictable mistakes. After working with everyone from Lake Forest family offices to Evanston law firms, I can spot a doomed project in the first 15 minutes.

Here's what 97 projects taught me about what actually works.

Start With Broken Processes, Not Cool Technology

Project #3 was a disaster. A Winnetka wealth management firm wanted to implement GPT-4 for "client insights." They had no clear problem to solve. Just FOMO about AI.

Six weeks and $15,000 later, they had a beautiful ChatGPT wrapper that nobody used.

Compare that to project #89. A Lake Bluff personal injury firm came to me with a specific pain point: "Our intake process takes 3.5 hours per new client. We're losing cases because we can't respond fast enough."

"The most dangerous person is the one who listens to every word you say and takes notes and then months later takes some action you don't want them to take."

— Marc Andreessen, on the importance of clear problem definition

I've learned to ask three questions in every initial call: What specific process is broken? How much time does it waste daily? What happens if you don't fix it?

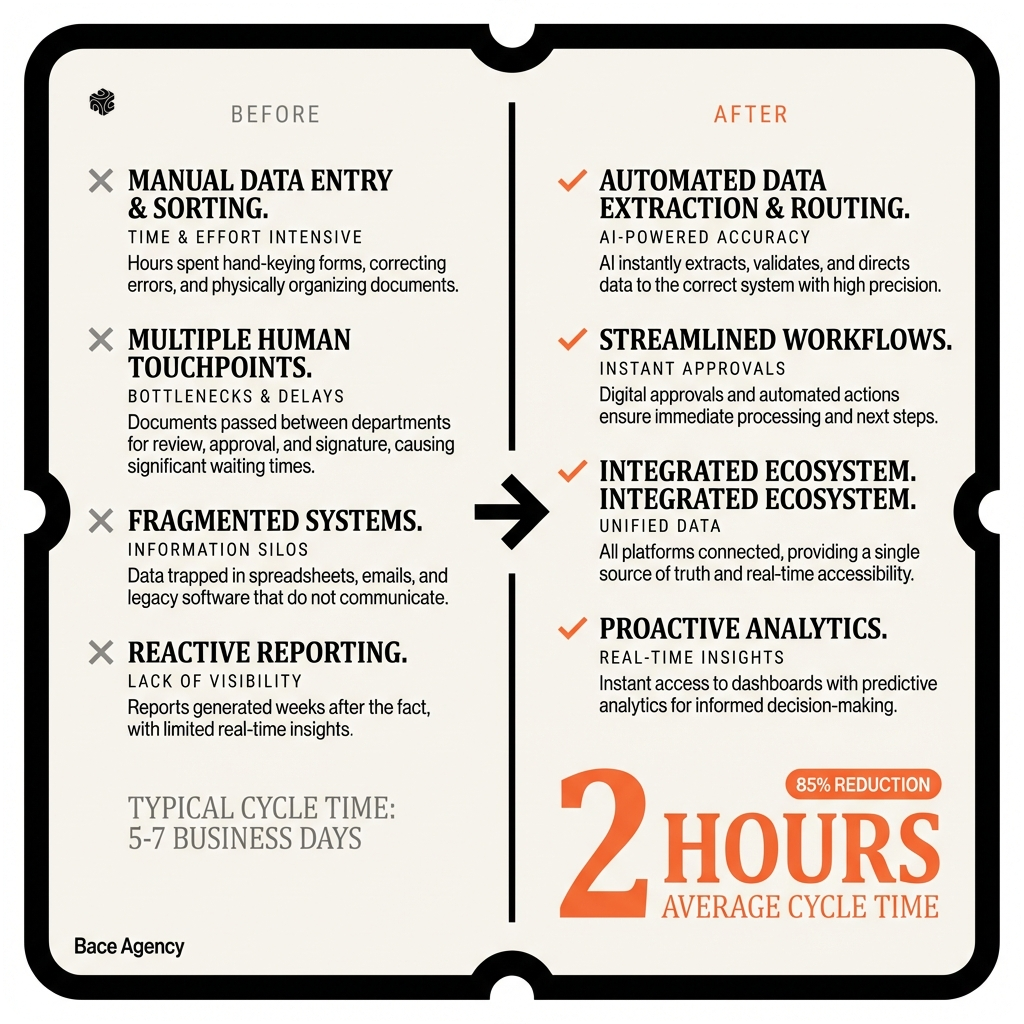

The successful projects always start with broken processes. Document review taking 6 hours per case. Client onboarding requiring 47 manual steps. Claims processing with a 12% error rate.

83%

Success rate when starting with a specific broken process vs. 31% for "general AI exploration"

At Bace Agency, we've developed what I call the Pain Point Prioritization Matrix. We map every broken process by impact (revenue/cost) and complexity (technical difficulty). The sweet spot? High impact, medium complexity. These projects deliver ROI in 30-60 days.
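One way to picture the matrix is as a simple scoring pass over candidate processes. The sketch below is illustrative only: the 1-5 scales, the sweet-spot cutoffs, and the names `Process`, `in_sweet_spot`, and `prioritize` are assumptions made for this example, not Bace Agency's actual tooling.

```python
from dataclasses import dataclass


@dataclass
class Process:
    name: str
    impact: int      # 1 (low) to 5 (high): revenue or cost at stake (assumed scale)
    complexity: int  # 1 (low) to 5 (high): technical difficulty (assumed scale)


def in_sweet_spot(p: Process) -> bool:
    """High impact, medium complexity, using assumed cutoffs."""
    return p.impact >= 4 and 2 <= p.complexity <= 3


def prioritize(processes: list[Process]) -> list[Process]:
    """Sweet-spot candidates first, then higher impact, then lower complexity."""
    return sorted(
        processes,
        key=lambda p: (not in_sweet_spot(p), -p.impact, p.complexity),
    )


# Hypothetical candidates with made-up scores.
candidates = [
    Process("Client intake", impact=5, complexity=3),
    Process("Generic 'client insights' chatbot", impact=2, complexity=4),
    Process("Claims data entry", impact=4, complexity=2),
    Process("Investment rebalancing", impact=5, complexity=5),
]

for p in prioritize(candidates):
    label = "sweet spot" if in_sweet_spot(p) else "later"
    print(f"{p.name}: impact={p.impact}, complexity={p.complexity} ({label})")
```

The scores themselves still come from conversations with the firm. The code only keeps the ranking consistent once those numbers are agreed.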

The Lake Bluff firm? We automated their intake forms, built custom GPT agents for case assessment, and connected everything to Clio. New client processing dropped from 3.5 hours to 22 minutes. They hired two more attorneys with the time savings.

Your Data Quality Determines Everything

Project #23 nearly broke me. A Glencoe family office with "comprehensive client data." I spent two weeks building beautiful AI workflows before discovering their CRM hadn't been updated in 18 months. Names were misspelled. Addresses were wrong. Phone numbers led to pizza places.

You can't build AI on garbage data. I learned this lesson the hard way.

Now I start every project with a data audit. Real data quality, not just "we have clean data." I pull random samples. I trace data lineage. I check for duplicates, inconsistencies, and gaps.
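A first pass at that audit can be quite small. The following is a minimal sketch, assuming records arrive as plain dictionaries exported from a CRM. The field names in `REQUIRED_FIELDS`, the `audit` function, and the choice of email as the duplicate key are all hypothetical and would need to match the firm's real schema.

```python
import random
from collections import Counter

REQUIRED_FIELDS = ["name", "email", "phone"]  # hypothetical schema


def audit(records, sample_size=100, seed=0):
    """Sample records and report duplicate and completeness rates."""
    rng = random.Random(seed)
    sample = rng.sample(records, min(sample_size, len(records)))

    # Duplicates: the same normalized email appearing more than once.
    emails = Counter(
        r["email"].strip().lower() for r in sample if r.get("email")
    )
    duplicates = sum(count - 1 for count in emails.values() if count > 1)

    # Gaps: a record is complete only if every required field is non-empty.
    complete = sum(
        all((r.get(f) or "").strip() for f in REQUIRED_FIELDS) for r in sample
    )

    return {
        "sampled": len(sample),
        "duplicate_rate": duplicates / len(sample) if sample else 0.0,
        "complete_rate": complete / len(sample) if sample else 0.0,
    }


# Made-up records for illustration.
records = [
    {"name": "A. Client", "email": "a@example.com", "phone": "555-0100"},
    {"name": "A Client", "email": "A@Example.com ", "phone": "555-0100"},
    {"name": "B. Client", "email": "b@example.com", "phone": ""},
    {"name": "C. Client", "email": None, "phone": "555-0102"},
]

report = audit(records)
print(report)
```

A script like this does not replace tracing data lineage by hand, but it turns "we have clean data" into numbers that can be checked.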

Here's what good data looks like: A Highland Park insurance agency I worked with had Applied Epic integrated with DocuSign, connected to their claims system, synced with their accounting software. Every field was validated. Every update was logged. When we built their AI claims processor, it worked flawlessly from day one.

Bad data looks like project #31: A Kenilworth law firm with client files spread across Outlook, three different document management systems, and "Sarah's computer." We spent more time cleaning data than building AI.

"The goal is to turn data into information, and information into insight."

— Carly Fiorina, on the foundation of knowledge work

I've developed a data readiness checklist that predicts project success with 90% accuracy:

| Data Quality Factor | Success Rate | Typical Issues |
|---|---|---|
| Single source of truth | 87% | Multiple systems with conflicting data |
| Regular updates (daily/weekly) | 79% | Stale data, manual entry backlogs |
| Validated fields | 72% | Free text where structure is needed |
| Complete records (>85%) | 68% | Missing critical fields |

The pattern is clear: firms with clean, structured, regularly updated data see AI ROI within 45 days. Firms with messy data spend 3-6 months just getting ready for AI.

At my Northwestern presentation last month, I shared this rule: "If you wouldn't trust your data to make a business decision, don't trust it to train AI."

The Glencoe family office? We spent eight weeks cleaning their data before writing a single line of AI code. Their portfolio performance reports now generate automatically every morning. Clean data was worth the wait.

Team Adoption Is Make-or-Break

Project #44 had perfect technology. Beautiful Claude integration, flawless Zapier workflows, seamless HubSpot connection. The AI worked exactly as designed.

Nobody used it.

The Evanston financial advisory firm's team saw AI as a threat. "It's going to replace us." They found creative ways to avoid the new system. Manual processes mysteriously became "more reliable." The AI sat unused while the firm paid $2,400 monthly for unused licenses.

I learned that technical success means nothing without human adoption.

Now I spend 40% of project time on change management. Real change management, not just a training session. I identify champions early. I address fears directly. I show clear wins that make everyone's job easier.

"If you want to build a ship, don't drum up people to collect wood and don't assign them tasks and work, but rather teach them to long for the endless immensity of the sea."

— Often attributed to Antoine de Saint-Exupéry, on inspiring adoption

The successful projects follow the same adoption pattern: Start with one eager user. Show quick wins. Let success spread organically. Never force adoption.

Project #67 did this perfectly. A Lake Forest insurance agency had one claims processor who loved technology. We built the AI workflow around her needs. Within two weeks, she was processing claims 60% faster. Her colleagues saw the results and asked to be trained.

91%

User adoption rate when starting with willing champions vs. 34% for mandated rollouts

I've identified five adoption accelerators that work every time:

1. Make their current job easier, not different. Don't change workflows. Enhance them. The Highland Park law firm kept using Clio exactly as before. AI just made document review faster.

2. Show individual benefits, not just company ROI. "You'll leave work 30 minutes earlier" beats "We'll increase firm efficiency by 15%."

3. Train in small groups, not all-hands meetings. Three people learn better than thirty. They ask real questions. They share real concerns.

4. Provide immediate support. Bace Agency includes 30 days of unlimited Slack support with every implementation. Questions get answered in minutes, not days.

5. Celebrate early wins publicly. When the first user saves two hours on a complex case, everyone knows about it. Success becomes social proof.

The Evanston firm that initially rejected AI? I came back six months later with a different approach. Started with one person. Focused on making her job easier. Today, their entire team uses AI for client research and document preparation.

Measure Business Impact, Not Technical Metrics

Early in my career, I was obsessed with technical metrics. Model accuracy. Processing speed. API response times. I'd present beautiful dashboards showing 99.7% uptime and sub-200ms latency.

Clients would nod politely and ask: "But how much money did this save us?"

Project #52 was my wake-up call. A Wilmette accounting firm's document processing AI achieved 97.3% accuracy. Technically impressive. But it was still making errors on tax documents that cost $1,200 per mistake to fix. The firm nearly canceled the project.

I realized I was measuring the wrong things.

Now every Bace Agency project has three business metrics defined upfront: time saved, errors reduced, or revenue generated. Technical metrics support these, but business impact drives decisions.

Here's how I measure what matters:

Time Saved: The Lake Forest family office saves 12 hours per week on investment research. At $150/hour loaded cost, that's $93,600 annually. We measure this weekly, not monthly.

Errors Reduced: The Highland Park insurance agency dropped from 6.1% error rate to 0.3% on policy applications. Each error cost $47 in rework. The AI prevented 847 errors in six months, saving $39,809.

Revenue Generated: The Glencoe law firm responds to new inquiries 73% faster. Their conversion rate improved from 31% to 47%. That's an additional $127,000 in retained cases quarterly.
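The time and error figures above are plain arithmetic, and it helps to keep the formulas explicit so anyone can recheck them. This short sketch uses the numbers quoted in the examples. The function names and the 52-week year are assumptions for illustration.

```python
WEEKS_PER_YEAR = 52  # assumes savings accrue every week of the year


def annual_time_savings(hours_per_week, loaded_hourly_cost):
    """Dollar value of time saved over a year."""
    return hours_per_week * loaded_hourly_cost * WEEKS_PER_YEAR


def rework_savings(errors_prevented, cost_per_error):
    """Dollar value of rework avoided."""
    return errors_prevented * cost_per_error


# Family office: 12 hours per week at $150/hour loaded cost.
print(annual_time_savings(12, 150))  # 93600

# Insurance agency: 847 errors prevented at $47 each.
print(rework_savings(847, 47))  # 39809
```

The revenue figure is harder to reproduce this way, because it also depends on inquiry volume and average case value, which vary by firm.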

"What gets measured gets managed."

— Often attributed to Peter Drucker, on the power of the right metrics

| Business Metric | How We Track It | Typical ROI Timeline |
|---|---|---|
| Time Saved | Before/after process timing | 30-45 days |
| Error Reduction | Quality control audits | 60-90 days |
| Revenue Impact | Conversion rate tracking | 90-120 days |
| Cost Avoidance | Process automation analysis | 45-60 days |

The most successful projects track business impact daily. Not through complex dashboards, but simple metrics everyone understands. The Kenilworth wealth management firm has a TV screen showing "Hours Saved This Week." It hit 127 hours last week. The entire team knows AI is working.

$247K

Average annual ROI measured across 73 completed projects

I've learned to translate every technical achievement into business language. "We improved model precision by 12%" becomes "We prevented $23,400 in rework costs." "API latency decreased by 150ms" becomes "Client responses are now 3x faster."

The Wilmette accounting firm with the accuracy problem? We shifted focus from perfect accuracy to business impact. The AI now flags uncertain documents for human review instead of guessing. Zero costly errors in eight months. Sometimes good enough is perfect.
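Flagging uncertain documents usually comes down to a confidence threshold. Here is a minimal sketch of that routing, assuming the classifier exposes a confidence score between 0 and 1. The `Prediction` type, the `route` function, and the 0.95 cutoff are hypothetical and not the firm's actual configuration.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.95  # assumed cutoff; tune against the cost of an error


@dataclass
class Prediction:
    document_id: str
    label: str
    confidence: float  # model score in [0, 1], assumed to be available


def route(pred: Prediction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Accept confident predictions; send everything else to a person."""
    return "auto" if pred.confidence >= threshold else "human_review"


# Made-up predictions for illustration.
batch = [
    Prediction("doc-001", "W-2", 0.99),
    Prediction("doc-002", "1099-MISC", 0.81),
]

for pred in batch:
    print(pred.document_id, pred.label, route(pred))
```

With errors costing $1,200 each, a stricter threshold that sends more documents to review can still be the cheaper option.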

Scale What Works, Kill What Doesn't

Project #71 taught me the hardest lesson: not every AI implementation should scale.

A Lake Bluff investment firm built an amazing AI research assistant. It worked perfectly for their senior analyst who understood its limitations and strengths. When they rolled it out firm-wide, junior analysts started making costly investment recommendations based on AI outputs they didn't understand.

The firm lost $340,000 on bad trades before shutting down the system.

I learned that scaling requires more than copying successful technology. You're scaling processes, training, culture, and context. Miss any piece and the whole thing falls apart.

Now I approach scaling methodically. Every successful project gets evaluated for scaling potential across four dimensions:

Process Standardization: Can this workflow be documented clearly enough for anyone to follow? The Highland Park insurance agency's claims processing works because every step is identical. Their document review process varies too much to scale.

Skill Requirements: Does this AI need expert judgment or just following instructions? The Evanston law firm's contract review AI scales because it flags issues for attorney review. Their litigation strategy AI requires too much legal experience to scale beyond senior partners.

Error Tolerance: What happens when this AI makes mistakes? The Glencoe family office's meeting scheduler can make errors without major consequences. Their investment rebalancing AI requires perfect accuracy.

Context Dependency: Does success require deep knowledge of specific clients, cases, or situations? The Lake Forest wealth management firm's client communication AI works because every client interaction follows similar patterns. Their investment advice AI is too context-dependent to scale.
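The four dimensions can be written down as a simple checklist so that every use case gets the same questions. This is a rough sketch under assumed yes/no answers. The `ScalingAssessment` class, its field names, and the ratings given to the two example use cases are illustrative, not a record of how any client was scored.

```python
from dataclasses import dataclass


@dataclass
class ScalingAssessment:
    use_case: str
    process_is_standardized: bool  # can anyone follow the documented steps?
    needs_expert_judgment: bool    # does safe use depend on senior experience?
    errors_are_tolerable: bool     # are mistakes cheap and easy to catch?
    is_context_dependent: bool     # does it rely on deep client or case knowledge?

    def blockers(self) -> list[str]:
        """List the dimensions that argue against scaling this use case."""
        found = []
        if not self.process_is_standardized:
            found.append("process standardization")
        if self.needs_expert_judgment:
            found.append("skill requirements")
        if not self.errors_are_tolerable:
            found.append("error tolerance")
        if self.is_context_dependent:
            found.append("context dependency")
        return found


# Illustrative ratings only.
scheduler = ScalingAssessment("Meeting scheduler", True, False, True, False)
rebalancing = ScalingAssessment("Investment rebalancing", True, True, False, True)

for a in (scheduler, rebalancing):
    issues = a.blockers()
    verdict = "candidate for scaling" if not issues else "hold: " + ", ".join(issues)
    print(f"{a.use_case}: {verdict}")
```

Treating any single blocker as a reason to hold is a deliberately conservative assumption. A firm could instead weight the dimensions differently.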

"Scale is not just about size, it's about systems."

— Elon Musk, on the difference between growth and scalable growth

My scaling framework has three phases:

Phase 1: Perfect the Original. Get one use case working flawlessly for 90 days. Measure every aspect. Document every exception. The Wilmette accounting firm spent four months perfecting AI document classification for one document type before expanding.

Phase 2: Adjacent Expansion. Scale to similar processes or users. The Highland Park insurance agency went from auto claims to property claims to workers comp. Each expansion built on proven patterns.

Phase 3: Systematic Rollout. Full deployment with established training, support, and governance. This phase often takes longer than the original implementation.

The pattern across successful scaling projects is patience. The firms that rush to scale end up with expensive failures. The firms that scale methodically create sustainable competitive advantages.

At Bace Agency, we've seen 23 projects successfully scale beyond their original scope. Every single one followed the same patient approach: perfect, adjacent, systematic.

The Lake Bluff investment firm? We rebuilt their AI research assistant with better guardrails and training. Started with one junior analyst. Added safeguards for high-risk recommendations. Today, their entire research team uses AI while senior analysts maintain oversight. Scale with wisdom, not speed.

Frequently Asked Questions

What's the biggest mistake businesses make when implementing AI?

Starting with technology instead of problems. Successful AI projects begin with broken processes that waste time or money, not with cool AI capabilities looking for applications.

How long does it typically take to see ROI from AI automation?

Most Bace Agency clients see measurable business impact within 45-60 days for process automation projects. Revenue impact takes longer, typically 90-120 days, but time savings and error reduction show results quickly.

Do you need perfect data to start with AI?

No, but you need good enough data. I recommend the 85% rule: if 85% of your data fields are complete and accurate, you can start building AI solutions while improving data quality in parallel.

How do you handle employee resistance to AI implementation?

Start with willing champions, show clear individual benefits, and never mandate adoption. Focus on making current jobs easier rather than replacing tasks. Success spreads naturally when people see real value.

When should a business consider scaling their AI implementation?

Only after the original use case has worked flawlessly for at least 90 days with measurable business impact. Scaling requires systematizing processes, training, and support structures that take time to develop.

After 97 projects, I'm convinced AI success isn't about the technology. It's about understanding business problems, preparing your foundation, managing change, measuring impact, and scaling thoughtfully.

The firms that follow these patterns consistently see ROI within 60 days. The ones that skip steps waste months and money on failed implementations.

Want to know which pattern fits your business? I've seen these lessons play out across insurance agencies, law firms, family offices, and financial advisors throughout the North Shore. You can see detailed examples of successful implementations in our case studies section.

Every business is different, but the patterns are remarkably consistent. Whether you're in Lake Forest or Highland Park, the fundamentals of AI success remain the same: start with real problems, build on solid foundations, and scale systematically.

Ready to avoid the common pitfalls and implement AI that actually works? Book a free 30-minute AI audit where we'll assess your processes, data readiness, and scaling opportunities. No generic advice – just specific insights based on what I've learned from 97 real-world AI projects.

